Friday, May 18th, 2012

You might think Definition of Done (DoD) is a brilliant idea from the Agile world…but the dirty little secret is…it’s just a holdover from the waterfall era.

While the DoD thought-process is helpful, it can lead to certain unwanted behavior in your team. For example:

- DoD usually ends up being a measure of output; it rarely focuses on outcome.

- In some teams, I’ve seen it disrupt true collaboration and instead encourage more of a contractual and “cover my @ss” mentality.

- DoD creates a false sense (an illusion) of doneness. Unless we have real data showing users actually using and benefiting from the feature/story, how can we say it’s done?

- I’ve also seen teams gold-plating stuff in the name of DoD. DoD encourages an all-or-nothing approach. Teams are forced to build fully sophisticated features/stories, even when we are not sure whether those features/stories are really required.

- It gets harder to practice an iterative and incremental approach to developing features. DoD does not encourage experimenting with different sophistication levels of a feature.

I would much rather have team members truly collaborate on an ongoing basis, build features in an iterative and incremental fashion, and focus strongly on Simplicity (maximizing the amount of work NOT done). IME, Continuous Deployment is a great practice to drive some of this behavior.

More recent blog on this: Done with Definition of Done, or should I say Definition of Done Considered Harmful

Posted in Agile, Organizational | 4 Comments »

Friday, March 2nd, 2012

Often companies ask me:

“How do we know if this process is successful? How do we measure if this process is working for us?”

I don’t think any process by itself can make someone successful. A lot depends on the company, its values, principles, nature of business, its people and so on.

If I wanted to introduce a new process into my company, this is what I would measure or look for:

- Is it helping my product/organization? Things like

- time to market,

- frequency of releases,

- perceived quality/stability of the product and so on.

- Basically, the aspects of my product delivery that are good (and that I want to keep doing) and the aspects that need improvement.

- Is the team evolving the process? Are they internalizing the process and reducing the amount of ceremony?

- While the process should encourage people to do reflective improvement, it should also encourage some amount of disruptive-change thinking in the teams/company.

- Is the process creating growth opportunities for my people?

- I would like the process to encourage growth in terms of them becoming generalizing specialists and not being cornered into silos.

- I want my people to improve their overall understanding and involvement in the overall product development process rather than just knowing or caring about their little piece.

Also, Jeff Patton has a wonderful article on performing a simple process health checkup.

He suggests we look for the following “properties” to assess the process’ health:

- Frequent delivery

- Reflective improvement

- Close communication

- Focus

- Personal safety

- Easy access to experts

- Strong technical environment

- Sunny day visibility

- Regular cadence

I would like to add 3 more properties to this list:

- High energy

- Empowered teams

- Disruptive change or Safe-Fail Experimenting

Posted in Agile, Coaching, Organizational, Training | No Comments »

Saturday, November 12th, 2011

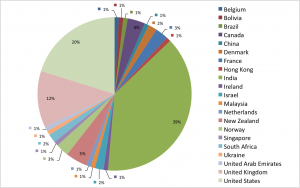

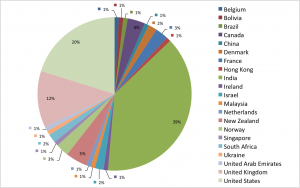

Did you know how truly diverse the Agile India 2012 conference program committee is?

That’s right! We have over 100 members from 21 countries.

Posted in Agile, agile india, Community, Conference | No Comments »

Saturday, October 22nd, 2011

To be successful in an agile environment, IMHO team members need to learn the following skills:

- Embracing uncertainty/change and finding effective ways to deal with it.

- Tight collaboration and communication with everyone involved.

- Collective Ownership, Drive and Discipline to get things done.

- Eliminating Waste: Mercilessly looking for waste and trying to eradicate it.

- Fail-fast: Breaking a large problem down into small safe-fail experiments and then being willing to try and learn quickly.

- Systems thinking: Understanding how things influence one another within a whole system and avoiding local optimizations.

- Critical thinking: Reasonable, reflective thinking focused on deciding what to believe or do. In other words, thinking about thinking.

- Open to experimenting with radical ideas

It is very important for people to understand that in an agile environment, “Action Precedes Clarity!”

Posted in Agile, Organizational | 2 Comments »

Thursday, September 22nd, 2011

Gentle reminder, the early bird submission for the Agile India 2012 Conference closes on 26th Sep 2011.

Visit our submission system to get started.

Some resources to help you with your submission:

Posted in Agile, agile india, Community, Conference | No Comments »

Wednesday, June 1st, 2011

Agile gave us a wonderful head-start in a different direction than the one we were used to (heavyweight methods). Personally, I feel we’ve got as much value from it as we could. Now it’s time to start thinking from a different direction, building on what we already know and, to some extent, unlearning some things we know.

Wait a second, isn’t Agile all about “Inspect and Adapt”?

There is a limit to “inspect and adapt”. If you look at the Lean movement, most people talk about Kaizen (small gradual change, change for the good, which is in-line with inspect-and-adapt). But very few people talk about Kaikaku (disruptive change or transformation).

Remember, Agile was Kaikaku for most of us in the late 90s. And then we’ve applied Kaizen to it for many years. IMHO, now it’s time to apply Kaikaku again.

Posted in Agile, Community, post modern agile | No Comments »

Tuesday, February 1st, 2011

As more and more companies are moving to the Cloud, they want their latest, greatest software features to be available to their users as quickly as they are built. However there are several issues blocking them from moving ahead.

One key issue is the massive amount of time it takes for someone to certify that the new feature is indeed working as expected and to assure that the rest of the features will continue to work. In spite of this long waiting cycle, we still cannot assure that our software will not have any issues. In fact, many times our assumptions about the user’s needs or behavior might themselves be wrong. But this long testing cycle only helps us validate that the software works as we assumed it should.

How can we break out of this rut & get thin slices of our features in front of our users to validate our assumptions early?

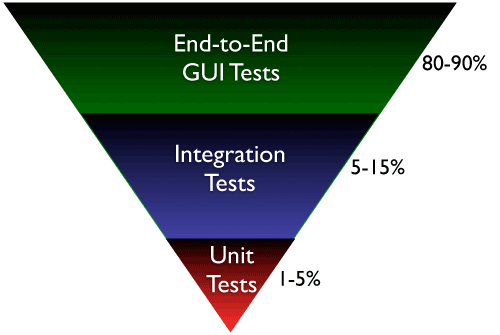

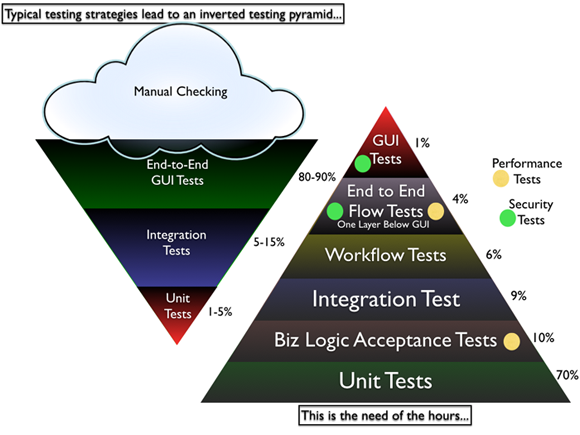

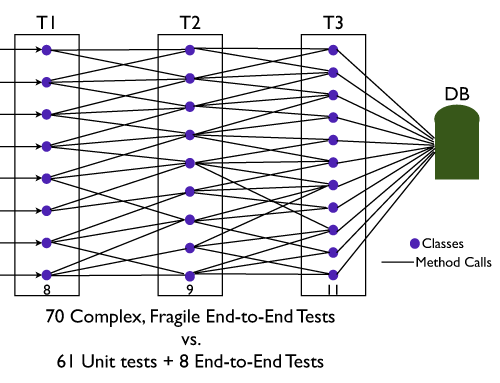

Most software organizations today suffer from what I call the “Inverted Testing Pyramid” problem. They spend maximum time and effort manually checking software. Some invest in automation, but mostly in building slow, complex, fragile end-to-end GUI tests. Very little effort is spent on building a solid foundation of unit & acceptance tests.

This over-investment in end-to-end tests is a slippery slope. Once you start on this path, you end up investing even more time & effort on testing which gives you diminishing returns.

They end up with the majority (80-90%) of their tests being end-to-end GUI tests. Some effort is spent on writing so-called “integration tests” (typically 5-15%), resulting in a shocking 1-5% of their tests being unit/micro tests.
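To make the proportions above concrete, here is a minimal sketch of how a team might check its own test mix. The `SuiteCounts` class, the `is_inverted` function, and the rule "end-to-end tests outnumber unit tests" are assumptions made for illustration; they are not a standard metric or part of any tool.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class SuiteCounts:
    """Number of automated tests at each level (hypothetical structure)."""
    unit: int
    integration: int
    end_to_end: int

    def shares(self) -> Dict[str, float]:
        """Return each level's fraction of the whole suite."""
        total = self.unit + self.integration + self.end_to_end
        if total == 0:
            raise ValueError("no tests counted")
        return {
            "unit": self.unit / total,
            "integration": self.integration / total,
            "end_to_end": self.end_to_end / total,
        }


def is_inverted(counts: SuiteCounts) -> bool:
    """Assumed rule of thumb: the pyramid is inverted when
    end-to-end tests outnumber unit tests."""
    shares = counts.shares()
    return shares["end_to_end"] > shares["unit"]


# A suite shaped like the one described above: mostly GUI tests.
typical = SuiteCounts(unit=30, integration=120, end_to_end=850)
print(typical.shares())
print("inverted:", is_inverted(typical))
```

Counting tests is only a rough proxy, of course. Execution time per level or failure rate per level would tell a similar story.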

Why is this a problem?

- The base of the pyramid is constructed from end-to-end GUI tests, which are famous for their fragility and complexity. A small pixel change in the location of a UI component can result in a test failure. GUI tests are also very time-sensitive, sometimes resulting in random failures (false-negatives).

- To make matters worse, most teams struggle to automate their end-to-end tests early on, which results in a huge amount of time spent on manual regression testing. It’s quite common to find test teams struggling to catch up with development. This lag causes many other hard development problems.

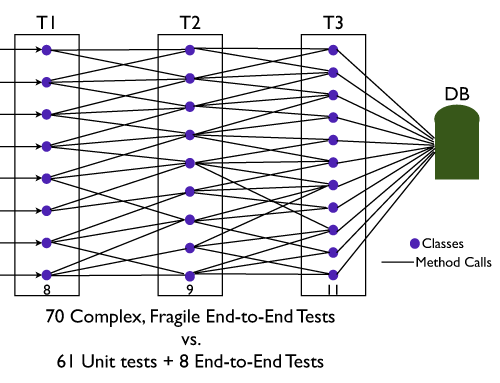

- The number of end-to-end tests required to get good coverage is much higher, and the tests themselves more complex, than the number of unit tests + selected end-to-end tests required. (BEWARE: Don’t be Seduced by Code Coverage Numbers)

- Maintaining a large number of end-to-end tests is quite a nightmare for teams. The following are some core issues with end-to-end tests:

- It requires deep domain knowledge and high technical skills to write quality end-to-end tests.

- They take a lot of time to execute.

- They are relatively resource intensive.

- Testing negative paths in end-to-end tests is very difficult (or impossible) compared to lower level tests.

- When an end-to-end test fails, we don’t get pin-pointed feedback about what went wrong.

- They are more tightly coupled with the environment and have external dependencies, hence they are fragile. Slight changes to the environment can cause the tests to fail (false-negatives).

- From a refactoring point of view, they don’t give the same comfort feeling to developers as unit tests can give.
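As a small illustration of the points above about negative paths and pin-pointed feedback, here is a sketch of unit-level tests. The `parse_quantity` function is a hypothetical order-form helper invented for this example; it does not come from any real codebase.

```python
import unittest


def parse_quantity(text: str) -> int:
    """Hypothetical helper: parse a positive whole-number quantity."""
    value = int(text.strip())  # raises ValueError for non-numeric input
    if value <= 0:
        raise ValueError("quantity must be positive")
    return value


class ParseQuantityNegativePaths(unittest.TestCase):
    def test_rejects_non_numeric_input(self):
        with self.assertRaises(ValueError):
            parse_quantity("three")

    def test_rejects_zero_and_negative(self):
        for bad in ("0", "-2"):
            with self.subTest(bad=bad):
                with self.assertRaises(ValueError):
                    parse_quantity(bad)

    def test_accepts_padded_number(self):
        self.assertEqual(parse_quantity(" 4 "), 4)
```

Saved as a module, these tests can be run with `python -m unittest`. Each one runs in memory with no browser or environment to set up, and a failure points directly at the function and the input that broke. Driving the same bad inputs through a GUI would require a full form submission per case.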

Again don’t get me wrong. I’m not suggesting end-to-end integration tests are a scam. I certainly think they have a place and time.

Imagine an automobile company building an automobile without testing/checking the bolts and nuts, all the way up to the engine, transmission, brakes, etc., and then just assembling the whole thing somehow and asking you to drive it. Would you test drive that automobile? Yet you will see many software companies using this approach to build software.

What I propose and help many organizations achieve is the right balance of end-to-end tests, acceptance tests and unit tests. I call this “Inverting the Testing Pyramid.” [Inspired by Jonathan Wilson’s book called Inverting The Pyramid: The History Of Football Tactics].

In a later blog post I can quickly highlight various tactics used to invert the pyramid.

Update: I recently came across Alister Scott’s blog on Introducing the software testing ice-cream cone (anti-pattern). Strongly suggest you read it.

Posted in Agile, Design, Testing | 14 Comments »

Friday, December 10th, 2010

Have an idea? Want to challenge some Agile practices? Like to share your experience?

Lightning talks are your opportunity to share your ideas or experiences in less than 10 minutes.

Send your talk proposal to [email protected] before Jan 7th 2011.

More about the conference: http://xp2011.org/

Posted in Agile, Community, Conference | No Comments »

Friday, November 26th, 2010

I hear many people claim Agile is just common sense. When I hear that, I feel these guys are way smarter than me, or they don’t really understand Agile, or they are plain lying.

When I first read about test-first programming, I fell off the chair laughing. I thought it was some kind of a joke. “How the heck can I write automated tests without even knowing what my code would look like?” You think TDD is common sense?

When I first moved from traditional methods to monthly iterations/sprints, we were struggling to finish what we signed up for in a month. It’s only natural to consider extending the time. You also realize that half a day of planning is not sufficient, since a lot of changes come mid-sprint. The logical way to address this problem is to extend the iteration/sprint duration, add more people, and spend more time planning to make sure you’ve considered all scenarios. But, to nobody’s surprise but yours, spending more time does not help (in fact, it makes things worse). In a moment of desperation, you propose to reduce the sprint duration to half, maybe even a quarter. Surprisingly, this works way better. Logical, right?

And what did you think of Pair Programming? It’s obvious, right, that 2 developers working together on the same machine will produce better quality software faster?

What about continuous integration? Integrating once a week/month is such a nightmare, and you want us to go through that many times a day? But of course it’s common sense that it would be better.

How about weekly/monthly demos of working software somehow magically improving collaboration and trust? Intuitive? And shipping small increments of software frequently to avoid rework and get fast feedback?

One after another, we can list each practice (especially the most powerful ones) and you’ll see why Agile is counter-intuitive (at least it was to me in the early 2000s, when I stumbled upon it).

Posted in Agile | 1 Comment »
